VMware: Creating an iSCSI network in vSphere ESXi 5.0

I was trying to configure my central storage (StarWind iSCSI SAN) in my homelab, but couldn’t find the software iSCSI adapter. Huh, has it been removed?

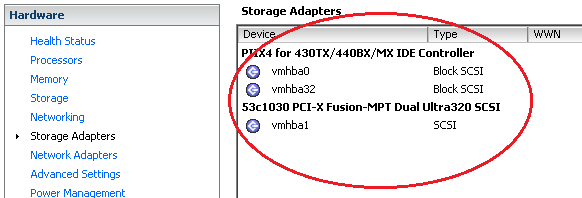

Storage Adapters:

Nope, it’s just not installed by default. You must add the adapter manually by clicking Configuration > Storage Adapters > Add Storage Adapter

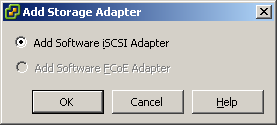

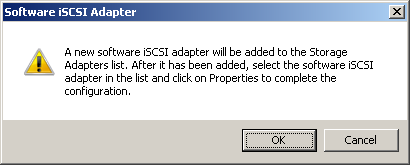

– Select: Add Software iSCSI Adapter

A new software iSCSI adapter will be added to the Storage Adapters list. After it has been added, select the software iSCSI adapter in the list and click Properties to complete the configuration.
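
If you prefer the command line, the same step can most likely be done from the ESXi 5.0 shell with the `esxcli iscsi` namespace. This is only a minimal sketch: it assumes you have shell or SSH access to the host, and the `run` helper and the function name are my own additions. By default it only prints the commands; set `APPLY=1` to actually run them.

```shell
# Dry run by default: print each command. Set APPLY=1 on the ESXi host to execute.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "$*"; fi; }

enable_software_iscsi() {
    # Enable the software iSCSI initiator (the CLI counterpart of "Add Software iSCSI Adapter")
    run esxcli iscsi software set --enabled=true
    # Show the current state, then list the adapters to find the vmhba name
    run esxcli iscsi software get
    run esxcli iscsi adapter list
}

enable_software_iscsi
```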


Event: Change Software Internet SCSI Status = Completed

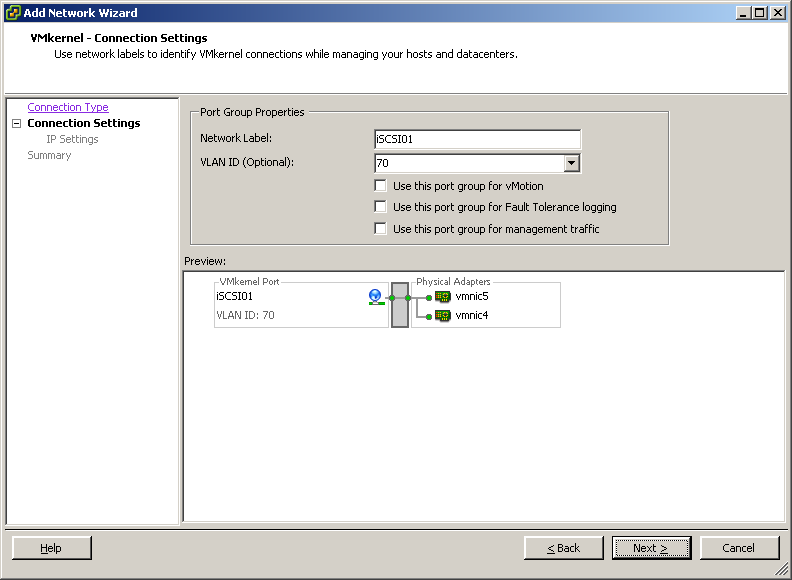

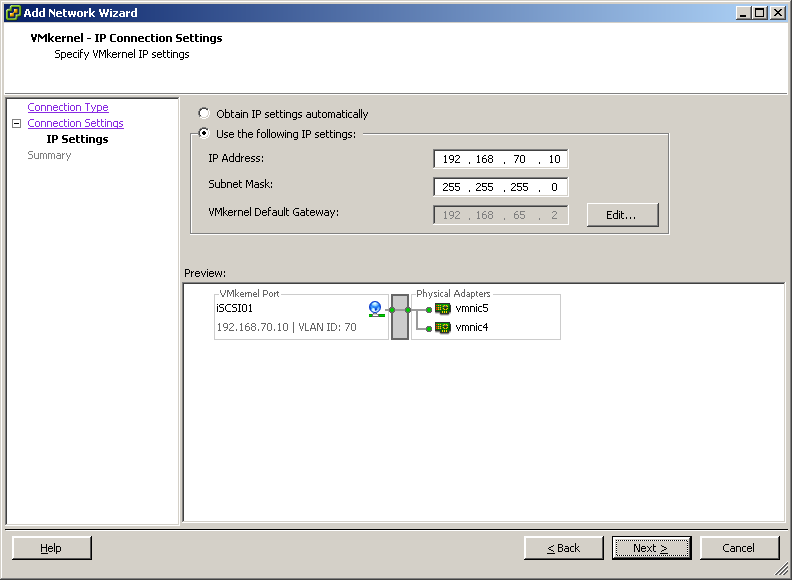

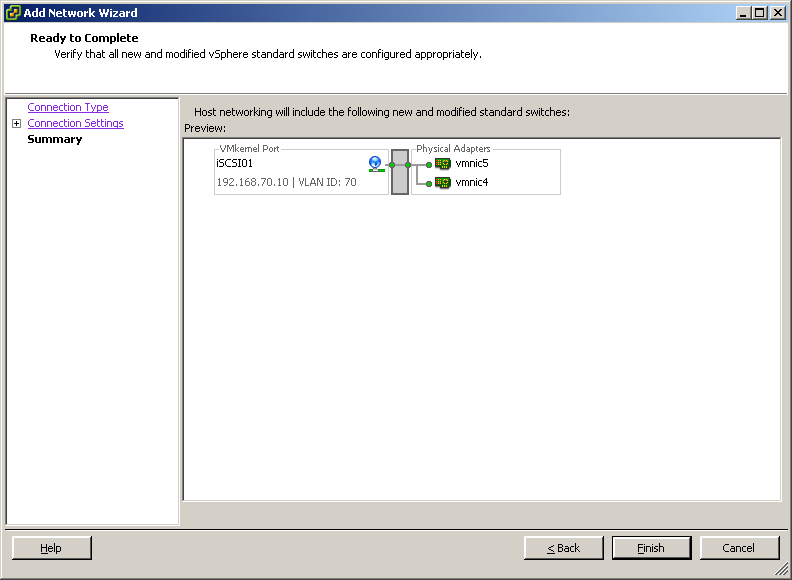

Create a new vSwitch (in my case with two VMkernel ports):

– Network label: iSCSI01

– Configure IP Address and Subnet Mask

..

– Finish the configuration, repeat this step to configure the 2nd iSCSI02 port
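
For reference, a rough CLI sketch of the same vSwitch layout. The names vSwitch2, vmnic4/vmnic5, vmk1/vmk2 and the IP addresses are taken from this post; the `run` and `add_vmk` helpers are hypothetical and mine. It prints the commands unless `APPLY=1` is set, so you can review them before touching the host.

```shell
# Dry run by default: print each command. Set APPLY=1 on the ESXi host to execute.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "$*"; fi; }

# add_vmk <portgroup> <vmk interface> <ip>: one port group plus one VMkernel port
add_vmk() {
    run esxcli network vswitch standard portgroup add --portgroup-name="$1" --vswitch-name=vSwitch2
    run esxcli network ip interface add --interface-name="$2" --portgroup-name="$1"
    run esxcli network ip interface ipv4 set --interface-name="$2" --ipv4="$3" --netmask=255.255.255.0 --type=static
}

create_iscsi_network() {
    # New standard vSwitch with both physical uplinks attached
    run esxcli network vswitch standard add --vswitch-name=vSwitch2
    run esxcli network vswitch standard uplink add --uplink-name=vmnic4 --vswitch-name=vSwitch2
    run esxcli network vswitch standard uplink add --uplink-name=vmnic5 --vswitch-name=vSwitch2
    # One VMkernel port per iSCSI path
    add_vmk iSCSI01 vmk1 192.168.70.10
    add_vmk iSCSI02 vmk2 192.168.70.11
}

create_iscsi_network
```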

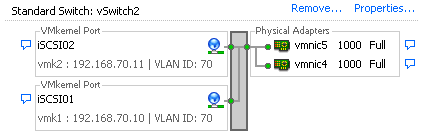

vSwitch2 result:

VMkernel – Override switch failover order:

Now we need to change the failover order for each VMkernel port, so that each one uses exactly one physical NIC:

iSCSI01: Active Adapter VMNIC4 – Unused Adapter: VMNIC5

iSCSI02: Active Adapter VMNIC5 – Unused Adapter: VMNIC4
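
The same override can probably be set per port group from the ESXi 5.0 shell. A sketch under assumptions: the port group and vmnic names come from this post, the `run` helper is mine, and I expect that an uplink left out of the active list ends up unused for that port group, so the `get` calls are there to check the result rather than trust it.

```shell
# Dry run by default: print each command. Set APPLY=1 on the ESXi host to execute.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "$*"; fi; }

set_iscsi_failover() {
    # Exactly one active uplink per iSCSI port group
    run esxcli network vswitch standard portgroup policy failover set --portgroup-name=iSCSI01 --active-uplinks=vmnic4
    run esxcli network vswitch standard portgroup policy failover set --portgroup-name=iSCSI02 --active-uplinks=vmnic5
    # Verify the active/standby/unused lists afterwards
    run esxcli network vswitch standard portgroup policy failover get --portgroup-name=iSCSI01
    run esxcli network vswitch standard portgroup policy failover get --portgroup-name=iSCSI02
}

set_iscsi_failover
```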

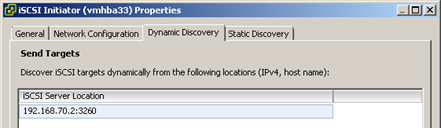

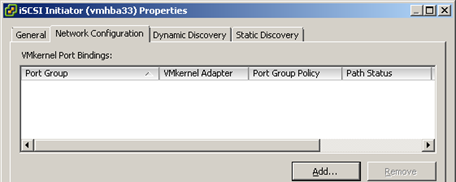

Configure Software iSCSI Initiator:

Go to the properties of the iSCSI initiator: Configuration > Storage Adapters > iSCSI Storage Adapter: vmhba** > Properties

Then set up Dynamic Discovery – Send Targets location (in my case the StarWind VSA)

– Add target: 192.168.70.2:3260
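
A possible CLI counterpart for the Send Targets entry. The target address is the one from this post; the adapter name vmhba33 is an assumption (look yours up in the Storage Adapters list), and the `run` helper is mine. It prints the commands unless `APPLY=1` is set.

```shell
# Dry run by default: print each command. Set APPLY=1 on the ESXi host to execute.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "$*"; fi; }

ADAPTER="${ADAPTER:-vmhba33}"   # assumption: replace with your software iSCSI adapter

add_send_target() {
    # Register the StarWind portal for dynamic (Send Targets) discovery
    run esxcli iscsi adapter discovery sendtarget add --adapter="$ADAPTER" --address=192.168.70.2:3260
    # List the configured Send Targets addresses to confirm
    run esxcli iscsi adapter discovery sendtarget list --adapter="$ADAPTER"
}

add_send_target
```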

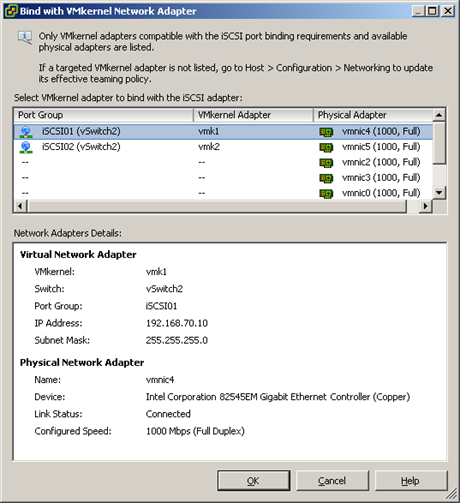

Next we need to bind the iSCSI VMkernel ports (and with them their pNICs) to the software iSCSI adapter. A looong, loooong time ago (vSphere 4) we had to configure this from the CLI (esxcli swiscsi nic add -n vmk1 -d vmhba33), but now it’s possible to do this via the GUI.

Go to the tab: Network Configuration

– Click: Add..

– Now you can select the VMkernel port (and its physical NIC) to bind to the iSCSI adapter
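
For anyone still scripting this: as far as I can tell, the vSphere 5 counterpart of the old `esxcli swiscsi nic add` command lives under `esxcli iscsi networkportal`. A minimal sketch, assuming the adapter is vmhba33 (as in the old command above) and the VMkernel ports are vmk1 and vmk2; the `run` helper is mine and only prints the commands unless `APPLY=1` is set.

```shell
# Dry run by default: print each command. Set APPLY=1 on the ESXi host to execute.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "$*"; fi; }

ADAPTER="${ADAPTER:-vmhba33}"   # assumption: replace with your software iSCSI adapter

bind_vmk_ports() {
    # Bind each iSCSI VMkernel port to the software iSCSI adapter
    for nic in vmk1 vmk2; do
        run esxcli iscsi networkportal add --adapter="$ADAPTER" --nic="$nic"
    done
    # Show the resulting bindings
    run esxcli iscsi networkportal list --adapter="$ADAPTER"
}

bind_vmk_ports
```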

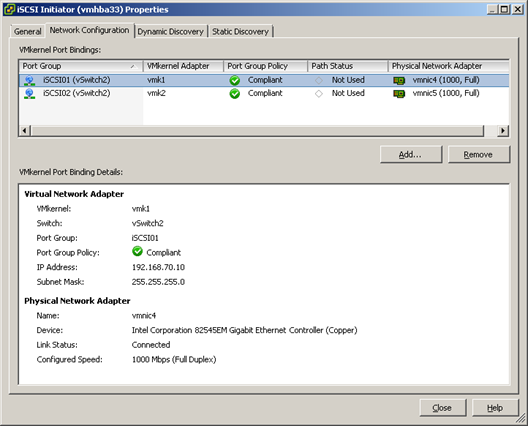

Overview:

| VMkernel Port Binding | iSCSI01 | iSCSI02 |
| --- | --- | --- |
| VMkernel Adapter | vmk1 | vmk2 |
| Port Group Policy | Compliant | Compliant |
| Path Status | Not Used | Not Used |
| Physical Network Adapter | VMNIC4 | VMNIC5 |
| Switch | vSwitch2 | vSwitch2 |
| IP Address | 192.168.70.10 | 192.168.70.11 |
| Subnet Mask | 255.255.255.0 | 255.255.255.0 |
| Configured Speed | 1000 Mbps (Full Duplex) | |

Now you can add the storage with the “Add Storage” wizard.
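
If the LUNs do not show up in the wizard, a rescan plus a look at the paths from the shell may help. This is a hedged sketch, not part of the original walkthrough: vmhba33 is an assumed adapter name and the `run` helper is mine. With both VMkernel ports bound you would expect to see two paths per device in the path list.

```shell
# Dry run by default: print each command. Set APPLY=1 on the ESXi host to execute.
run() { if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "$*"; fi; }

ADAPTER="${ADAPTER:-vmhba33}"   # assumption: replace with your software iSCSI adapter

rescan_and_check() {
    # Rescan the software iSCSI adapter for new targets and LUNs
    run esxcli storage core adapter rescan --adapter="$ADAPTER"
    # List all storage paths, then the multipath policy per device
    run esxcli storage core path list
    run esxcli storage nmp device list
}

rescan_and_check
```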

Nice job – This was going to be one of my first posts queued up on vSphere 5. You covered it nicely, including the most confusing or often forgotten part of the process to configure multipathing for swISCSI in vSphere 4.

Jas

Yes, nice post. The CLI binding of pNICs to the iSCSI VMkernel is gone. -:).

The last necessary CLI task when configuring iSCSI? … -:).

Best

Vladan

Think so 🙁 you can also set MTU size/Multipath etc. in this section.. it’s no rocket science anymore

You can set the MTU size via GUI as well?

Great!, no command line. It will be nice to get my hands on this soon

Yup, https://blog.vmpros.nl/2011/07/14/vmware-edit-mtu-settings-by-gui-in-vsphere-5-0/

Thanks. nice post!!

You have put vmk1 and vmk2 on the same subnet: 192.168.70.0/24. How can ESXi route the packets? Thanks!

Does it finally have *TRUE* active-active iSCSI?

By that I mean: will (or can) it use all active connections at the same time? And no, round-robin is not the same. Round-robin might boost read performance because it issues commands on line 1, then starts issuing them on line 2, etc., while data still comes in on line 1. It doesn’t do shit for writes, though, as the command contains all the data to be written, so only the bandwidth of one line is used at a time (as somewhat opposed to read commands when hopping with, say, 3 commands).

Nice post!

Regarding the CLI: does anyone know the correct syntax? I used the applicable ESXi 4.1 commands, but they don’t work.

thanks, nice guide. i learn things which i do not know…

Would there be any advantage to making the unused nic in each switch a Standby in case of failure of one nic?

You should try this software : http://slymsoft.com/autoconf-iscsi/

It’s an automating tool to create iSCSI configuration on ESXi 4 or 5 very easily.

Changing the MTU size to jumbo frames (9000) on the VMkernel port in a multipathing setup does not seem to work on ESXi 5.0. Leaving it at an MTU of 1500 works fine.

In a non-multipathing environment, changing the VMkernel port group to 9000 also works fine.

Strange behaviour.

Very nice post. No need to use CLI to do this. anymore, yeah! Very useful.

This was great!! Thank you so much for taking the time to write such a great post. I have been doing all my iSCSI work from the command line but with v5 I can do it all from the GUI! Very nice, very nice!

Point-click is for children. Real men do everything via a command-line because it can be automated. Do you want to point-click your way across a thousand hosts? thought so.

Got any info on the “new” CLI commands? I’ve got nowhere so far. Which is supremely aggravating.

Hi Matt, I agree and it must be automated of course 🙂

I’m writing a new script “Configure_iSCSI_network_vSphere5.ps1” it will be released in a few days, stay tuned!

Thanks for the post – its the clearest I’ve seen on Overriding switch fail-over order in the two VMkernels.

I followed it to the letter – only difference on my setup – direct IP no VLAN – I have two iSCSI Targets – vSphere Client finds all iSCSI targets but only allows one Active I/O even though there are two VMkernels – doesn’t show the available extra LUNs under “Add Storage” – didn’t have these issue under ESX3.5 – any clues re setting up two active links to two iSCSI Targets – what am I missing ?

In the past I’ve created a separate vSwitch for each vmknic-pnic pair. With all the explicit NIC settings this seemed more complicated. Is there a reason to do this as opposed to separate vSwitches?

@SafeTinspec,

I don’t think it really matters much whether 1 or 2 virtual switches are used. The important thing is the number of vmkernel port groups…as shown in the last screen shot. IMHO, easier using 2 virtual switches…but that’s just personal preference.

@SanderDaems

How do you configure round robin in ESXi 5? Is that something which needs to be configured via CLI?

I’ve written an article to configure Multipath with PowerCLI:

https://blog.vmpros.nl/2011/05/25/vmware-configure-multipath-policy-via-powercli/

@mkruger

Once a device is defined, right-click it and “manage paths” Change from “fixed” to “round robin”

On vSphere 4.x, various SAN vendors recommended changing the round robin I/O operation limit from 1000 (default) to 10 in order to more evenly balance the load. Anybody know if this setting has made it to GUI as well, or is it still only available via CLI?

Old 4.x CLI command to use was:

esxcli --server nmp roundrobin setconfig -d --iops 10 --type iops

dangit, formatting didn’t get my command correctly, correct command is:

esxcli --server ServerName nmp roundrobin setconfig -d DeviceIdentifier --iops 10 --type iops

good job.. finally I found out

What have you and others found to be the best way to verify that MPIO is actually being used after it has been configured properly?

I have a question…

I want to have the D:\ drive of a VM (Exchange server) connected via iSCSI to my NetApp.

Not a datastore on ESX!

Do I have to create a vSwitch for that?

Thanks a lot

Yes, to use RDMs in VMs you can create 1 (preferably 2) vSwitches. Configuration: 1x VMkernel, 1 pNIC and 1 port group called ISCSI01 (for vSwitch2: ISCSI02); don’t configure RR.

Add (both) the network adapter(s) to the virtual machine and configure the iSCSI initiator.

Please check if there’s an MPIO driver from the storage vendor to configure the multipathing correctly.

Always read the whitepapers for best practices PER vendor; here are some links:

NetApp

https://communities.netapp.com/servlet/JiveServlet/previewBody/11657-102-1-22108/TR3749NetAppandVMwarevSphereStorageBestPracticesJUL10.pdf

Dell EqualLogic

http://www.dellstorage.com/WorkArea/DownloadAsset.aspx?id=2412

HP LeftHand

http://www.vmware.com/files/pdf/techpaper/vmw-vsphere-p4000-lefthand-san-solutions.pdf

Nice post

I only have one question. I added everything that is necessary for the iSCSI datastore.

But when I try to add the datastore I get the error

“Call “HostDatastoreSystem.QueryVmfsDatastoreCreateOptions” for object “ha-datastoresystem” on ESXi “10.27.0.11” failed.”

The iSCSI target is a software iSCSI server on Red Hat 6.2 64-bit. This is just for testing purposes, as I need to have a test environment ready.

Regards Toine

Maybe this article will help you:

https://blog.vmpros.nl/2012/02/27/vmware-call-hostdatastoresystem-queryvmfsdatastorecreateoptions-for-object-ha-datastoresystem-on-esxi-servername-failed/

Dear Sander

Thank you. This was the issue. I saw the post already, but they were talking about adding a new disk.

So I skipped it, but now I used it. Perfect, thanks.

BTW, why is it that it works after this command?

Regards toine

Did anyone get this VMware networking config to work right with a DataCore SS-V iSCSI SAN?

this is still a best practice, big thanks!!